Agentic AI is changing how we interact with computers every day. Here is what that actually means for the way you run your team.

Better answers from AI models are no longer what drives major shifts in our relationship with them. What truly matters in 2026 is who carries the sequencing of work.

Throughout our careers, we have been the ones carrying that sequencing. We open the tab, click through the menus, pull the data, paste it somewhere else, and start over. The software grew increasingly powerful, but we dictated the rhythm of work because we were the operators.

Agentic AI is changing that arrangement.

For business leaders trying to figure out what AI actually means for their teams and their daily work, this shift is big enough to make it hard to predict what the job will look like in 5 or 10 years. Honestly, no one has a crystal ball. Still, some trends are hard to miss for those of us deeply involved with AI, business, and technology. Here is some food for thought.

From Tool-Based to Intent-Based: What Actually Changed

To understand why we make these predictions, it helps to get on the same page about the history of computing and the relationship between computers and their human overlords.

Command line, graphical interface, web browser, mobile app. The interface kept improving, but the underlying contract stayed the same. The human plans. The computer executes bounded steps. In other words, you hold the context in your head, navigate across siloed tools, and manually carry information from one place to the next.

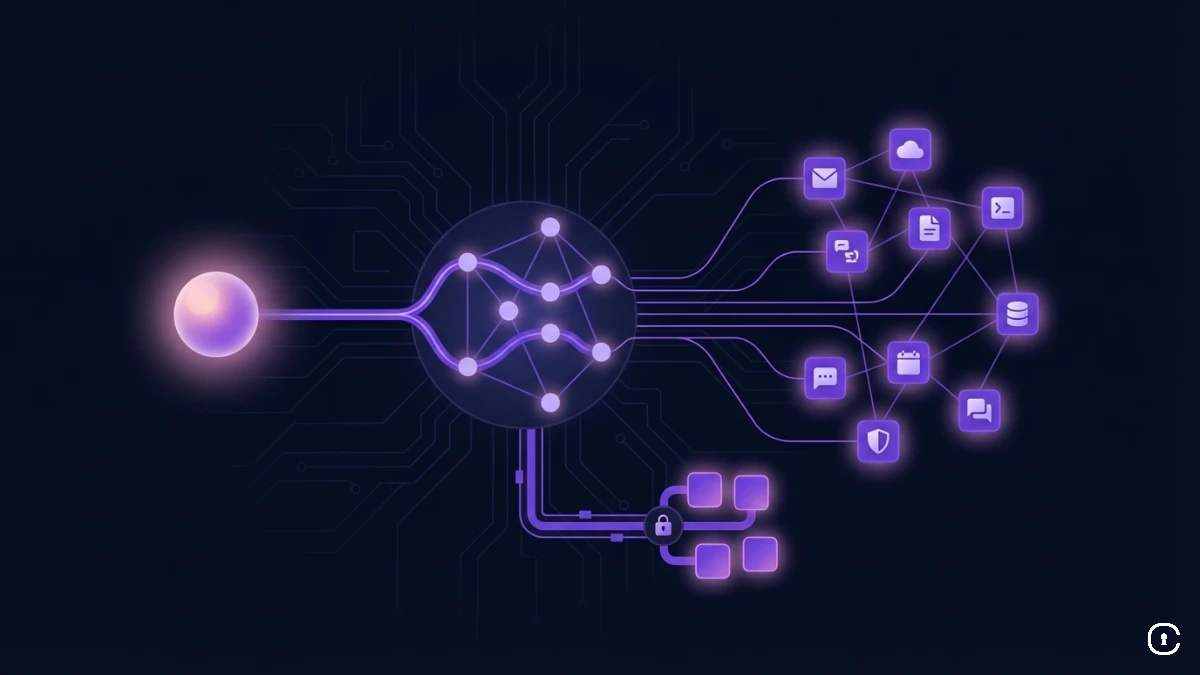

This is the main difference that agentic AI introduces in the structure of our interactions with computers. This shift is often described as intent-based interaction. Instead of instructing the system step by step, you define the goal, the conditions, and the constraints.

The concrete difference is that in a traditional workflow, every possible branch must be anticipated in advance by a human designer. In an agentic system, the model chooses which tool to call, in what order, and what to do when something does not work. In other words, it resolves uncertainty at runtime. In practice, this means the difference between opening five tabs to prep for a client meeting and asking your agent to do it for you.
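To make the contrast concrete, here is a minimal Python sketch of a meeting-prep task done both ways. Everything in it is an assumption made for illustration: the three tools are hypothetical stubs, the CRM failure is simulated, and `choose_next_tool` is a hand-written stand-in for the decision a real model would make at runtime.

```python
from typing import Callable, Dict, List, Optional, Set

# Hypothetical tools for meeting prep; names and behavior are illustrative only.
def fetch_crm_notes(client: str) -> str:
    raise ConnectionError("CRM is unavailable")  # simulate an unexpected failure

def fetch_email_thread(client: str) -> str:
    return f"Latest email thread with {client}"

def fetch_calendar(client: str) -> str:
    return f"Meeting with {client} at 10:00"

TOOLS: Dict[str, Callable[[str], str]] = {
    "crm": fetch_crm_notes,
    "email": fetch_email_thread,
    "calendar": fetch_calendar,
}

def fixed_workflow(client: str) -> List[str]:
    # Traditional workflow: the designer fixed the steps in advance,
    # so a failure nobody anticipated aborts the whole run.
    return [fetch_crm_notes(client), fetch_calendar(client)]

def choose_next_tool(done: Set[str], failed: Set[str]) -> Optional[str]:
    # Stand-in for the model's decision. A real agent would ask a model here.
    if "crm" not in done and "crm" not in failed:
        return "crm"
    if "crm" in failed and "email" not in done and "email" not in failed:
        return "email"  # fall back to email when the CRM is down
    if "calendar" not in done and "calendar" not in failed:
        return "calendar"
    return None  # nothing left to try

def run_agent(client: str, max_steps: int = 5) -> List[str]:
    done: Set[str] = set()
    failed: Set[str] = set()
    notes: List[str] = []
    for _ in range(max_steps):  # hard step limit so the loop always ends
        tool = choose_next_tool(done, failed)
        if tool is None:
            break
        try:
            notes.append(TOOLS[tool](client))
            done.add(tool)
        except ConnectionError:
            failed.add(tool)  # record the failure and let the next choice adapt
    return notes
```

With the simulated outage, `fixed_workflow("Acme")` raises an error on its first step, while `run_agent("Acme")` notes the failure, switches to the email thread, and still returns the calendar entry. The branch that handles the outage was picked during the run, not drawn on a flowchart beforehand.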

Microsoft's Work Trend Index 2025, which surveyed 31,000 people across 31 countries, found that 46% of leaders already report their organizations using agents to fully automate workstreams. So this shift is not coming; it is already here for many teams.

What People Are Actually Asking For

The clearest evidence of where this demand is heading came not from an enterprise product launch but from OpenClaw, an open-source project that made headlines and that you have probably already heard about.

OpenClaw is a local AI agent that connects to messaging platforms, files, and apps on your computer. Why did it come from open source? No serious company could have rolled this out so fast without making extreme security compromises. Indeed, security researchers at Cisco flagged real risks with some of its third-party extensions, and several major companies discouraged employees from using it.

But the appetite for such a tool was strong enough that the agent kept growing anyway, with a community that built more than 5,000 custom skills for it.

What is fascinating about this story is that it revealed a real unmet need. It proved that people are not primarily looking for better conversation with AI. They want relief from the administrative weight of their day: the inbox that never empties, the data scattered across five tools, the reporting that consumes half a morning every week. They want an AI that actually handles those things, not one that helps them handle those things faster.

We know this because of what the OpenClaw community actually built. The number one use case wasn't writing poetry. It was autonomous email triage: agents that unsubscribe from spam, categorize messages by urgency, and prep drafts. Users built "morning briefing" agents that independently queried their Stripe dashboards, calendars, and newsletters to deliver a single summary to their phones by 8:00 AM. One user even deployed an agent to buy a car. The agent emailed multiple dealerships, negotiated against sales tactics, and saved its owner $4,200 while the owner was sitting in a meeting.

OpenClaw is a rough preview of that future, not the finished version. But it clarified something leaders should not ignore: the demand is not for smarter assistants, but for systems that remove cognitive and administrative load. The organizations that respond to that signal will design around delegation, not conversation.

The New Human Skill: Specification

Here is where it gets interesting for leaders thinking about their teams. As the cost of producing work (code, analysis, reports, first-draft emails) collapses toward zero, the bottleneck shifts. More precisely, projects will fail less often because of bad execution and more often because of vague human instructions.

Research presented at CHI 2025 on "Interactive Debugging and Steering of Multi-Agent AI Systems" highlights a common pattern when working with autonomous agent teams: Breakdowns in performance often stem from unclear or under-specified instructions rather than a lack of agent capability. An agent told to "make it better" will always produce "something". But is that something strategically correct? That is entirely down to how well the human defined what "better" means.

What this means is that the most valuable skill on your team is shifting. It is moving away from people who can operate tools efficiently, and toward people who can specify outcomes precisely and judge whether a result is right, not just technically complete. Less operator, more product manager. The ability to translate a vague business need into a clear, testable brief for an AI agent is becoming a core professional competency.

The U.S. Department of Labor designated AI literacy a foundational workforce priority in its February 2026 framework. Among its core competencies are directing AI effectively, evaluating outputs, and using AI responsibly — skills that extend beyond clever prompting and move closer to defining intent and exercising judgment. At its core, that is a management capability.

The Operational Risks of Delegation

Agentic AI is genuinely useful. It is also genuinely new, and the failure modes are worth understanding before you encounter them.

The first is what researchers call the productivity paradox. A ten-minute automated task is worthless if it takes a human thirty minutes to verify it. Teams that add AI generation without redesigning their review process find that verification quietly eats the time they thought they had saved. This may be the central challenge of the current moment: moving from endless pilots to measurable business value requires redesigning review, not just deploying models.

Closely related is review fatigue. When agents produce outputs faster than humans can meaningfully audit them, oversight degrades. The NIST AI Risk Management Framework cautions that overreliance on automated systems can diminish meaningful human engagement, increasing the risk of automation bias. When output volume exceeds cognitive capacity, review becomes passive confirmation rather than critical evaluation.

There is also a cost dimension that catches leaders off guard. Unlike a human who instinctively stops when a task is clearly going nowhere, an AI agent has no intuitive sense of expense. An agent stuck in a recursive loop can quietly burn through compute budget overnight. Gartner increasingly highlights cost control and ROI realization as central challenges in scaling GenAI beyond pilot phases. This is why financial circuit breakers are not a nice-to-have; they are a precondition for running agents in production.
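A financial circuit breaker can be very small. The Python sketch below is one possible shape, not a reference implementation: the class names, the cap, and the per-step cost are all hypothetical, and amounts are kept in whole cents to avoid floating-point rounding.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a charge would push spending past the cap."""

class BudgetBreaker:
    def __init__(self, cap_cents: int) -> None:
        self.cap_cents = cap_cents
        self.spent_cents = 0

    def charge(self, cost_cents: int) -> None:
        # Refuse the charge before the money is spent, not after.
        if self.spent_cents + cost_cents > self.cap_cents:
            raise BudgetExceeded(
                f"cap of {self.cap_cents} cents would be exceeded"
            )
        self.spent_cents += cost_cents

def run_looping_agent(breaker: BudgetBreaker, cost_per_step_cents: int,
                      max_steps: int = 1_000_000) -> int:
    # Simulates an agent stuck in a loop; each step costs compute.
    steps = 0
    try:
        for _ in range(max_steps):
            breaker.charge(cost_per_step_cents)  # must succeed before the step runs
            steps += 1
    except BudgetExceeded:
        pass  # the breaker tripped; stop instead of burning budget overnight
    return steps

breaker = BudgetBreaker(cap_cents=500)  # hypothetical $5.00 cap
completed = run_looping_agent(breaker, cost_per_step_cents=20)
```

In this run the loop is cut off after 25 steps, with exactly 500 cents spent. The check lives outside the agent's own logic, so the cap holds whether or not the agent realizes it is stuck.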

Why Guardrails Must Be Infrastructure

That brings us to a separate, structural risk. An agent with broad, persistent access to your tools and data is a significant exposure. The answer is not to avoid agentic AI, but to insist on deterministic guardrails: hard, immutable rules enforced at the infrastructure level, not by the model's best judgment. Scoped permissions that expire after a task. Audit logs that record every action. Budget caps that cut off runaway processes before they become a problem. Without that layer, autonomy risks creating more cost than it saves.
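As a rough illustration of what "enforced at the infrastructure level" can mean, the Python sketch below puts a small gateway between the agent and its tools. The names `ScopedGrant` and `Gateway`, the action strings, and the timestamps are hypothetical and do not describe any particular product. The point is only that the allow or deny decision is a plain rule check, and that every attempt is logged either way.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, List, Optional

@dataclass(frozen=True)
class ScopedGrant:
    allowed_actions: FrozenSet[str]  # the only actions this task may perform
    expires_at: float                # epoch seconds; the grant is dead after this

@dataclass
class Gateway:
    grant: ScopedGrant
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def execute(self, action: str, now: Optional[float] = None) -> bool:
        # `now` can be injected for testing; it defaults to the real clock.
        now = time.time() if now is None else now
        allowed = (action in self.grant.allowed_actions
                   and now < self.grant.expires_at)
        # Log every attempt, including the denied ones.
        self.audit_log.append({"action": action, "time": now, "allowed": allowed})
        return allowed

grant = ScopedGrant(allowed_actions=frozenset({"calendar.read"}), expires_at=100.0)
gateway = Gateway(grant)

in_scope = gateway.execute("calendar.read", now=50.0)      # permitted and in time
out_of_scope = gateway.execute("email.send", now=50.0)     # never granted
after_expiry = gateway.execute("calendar.read", now=150.0) # grant has expired
```

Only the first call is allowed, and the log ends up with three entries. Nothing here depends on the model behaving well: the rule is checked in ordinary code that the agent cannot talk its way around.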

These realities explain why orchestration layers are emerging as mandatory enterprise infrastructure. Civic Nexus sits between your AI agents and the tools they need access to — managing authorizations, enforcing budgets, and logging every action — ensuring that autonomy operates within defined boundaries of visibility and control. If you are thinking seriously about deploying agents in your business, it is worth understanding what that layer looks like in practice.

Questions Leaders Are Already Asking

Will agentic AI replace people on my team?

Not in the way most headlines suggest. The pattern in early deployments is that repetitive execution work declines while oversight, judgment, and specification work increases. Salesforce's internal Agentforce deployment handled over 2 million support requests autonomously while the human team shifted toward higher-value relationship work. BCG and MIT Sloan's survey of 2,102 leaders found 45% expect fewer middle-management layers as roles shift from execution toward oversight. The job changes, but it does not disappear.

How do I retrain my team for the new agentic AI reality?

The safest bet is to invest in specification skills before anything else. Run internal exercises where people translate a vague business objective into a precise brief: what is the goal, what are the constraints, what does a good result actually look like? Teams that can do this consistently will get dramatically better results from any agentic system. Teams that cannot will spend their time cleaning up outputs.
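One way to run that exercise is to give the brief a fixed shape and check it for gaps before any agent sees it. The Python sketch below is an assumption about what such a template could look like. The field names and the example report are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Brief:
    goal: str = ""
    constraints: List[str] = field(default_factory=list)
    acceptance_checks: List[str] = field(default_factory=list)  # what "good" looks like

    def gaps(self) -> List[str]:
        # Return the names of the sections that are still empty.
        missing = []
        if not self.goal.strip():
            missing.append("goal")
        if not self.constraints:
            missing.append("constraints")
        if not self.acceptance_checks:
            missing.append("acceptance_checks")
        return missing

vague = Brief(goal="Make the weekly report better")

precise = Brief(
    goal="Cut the weekly sales report to one page",
    constraints=["Use only figures from the finance export", "Deliver by Monday 9:00"],
    acceptance_checks=["Fits on one page", "Every figure links to its source row"],
)
```

Here `vague.gaps()` reports that constraints and acceptance checks are missing, while `precise.gaps()` comes back empty. A template cannot judge whether the goal is the right one, but it does catch the "make it better" brief before it reaches an agent.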

How do junior employees build judgment if AI does the grunt work?

This is one of the least discussed risks of the agentic shift, and one of the most consequential. Traditionally, junior staff develop domain intuition by doing exactly the work agents are now handling: first drafts, data gathering, meeting summaries, routine analysis. That unglamorous work is how people learn to recognize what "good" looks like. If it disappears, organizations risk producing a generation of technically capable employees who have never had to develop the judgment that comes from being wrong at low stakes. The fix is intentional design: put junior team members in the reviewer and auditor role alongside senior mentors, explicitly, not just as a formality. Make the evaluation of AI outputs a learning exercise, not a rubber stamp. The apprenticeship model has to be rebuilt around oversight rather than execution.

Will agentic AI save time, or just create new kinds of work?

Forrester's Total Economic Impact™ of Microsoft 365 Copilot study projected substantial ROI over a three-year period for organizations that deployed it effectively. But headline ROI figures do not eliminate the productivity paradox. The difference often comes down to a decision made early: whether the organization redesigned its review process alongside its automation. Do not just add agents. Decide in advance which decisions they can complete and which still require human sign-off.

Where do we begin with agentic AI?

Start with something bounded. A weekly report that pulls from three systems. An inbox triage routine. A meeting prep workflow. The goal is not to automate everything at once. It is to build genuine intuition for how delegation to AI actually works, where it earns trust, and where your judgment still needs to be in the loop. If you want to see what a governed, production-ready agent setup looks like, Civic Nexus is a good place to start.

Key Takeaways

- Agentic AI shifts the model from tool-based interaction (you manage every step) to intent-based interaction (you define the outcome; the agent works out the path).

- The viral interest in tools like OpenClaw is a market signal that people want AI that acts, not AI that helps them act.

- As execution costs fall, specification and judgment become the scarcest skills on any team. Train for those before anything else.

- The productivity paradox is real: AI saves time generating but can cost time verifying. Design your review process before you deploy, not after.

- Junior employees need a rebuilt apprenticeship path. Put them in the reviewer role deliberately, or risk a judgment deficit in a few years when today's seniors move on.

- Deterministic guardrails and budget caps, enforced at the infrastructure level, are what make delegation safe. Trust should live in the structure, not in the model's good intentions.

The organizations that treat delegation as an infrastructure problem and not a feature upgrade will be the ones that move fastest without losing control. That is the real competitive advantage on offer. Not the tools themselves, but the discipline to deploy them with the right structure around them.