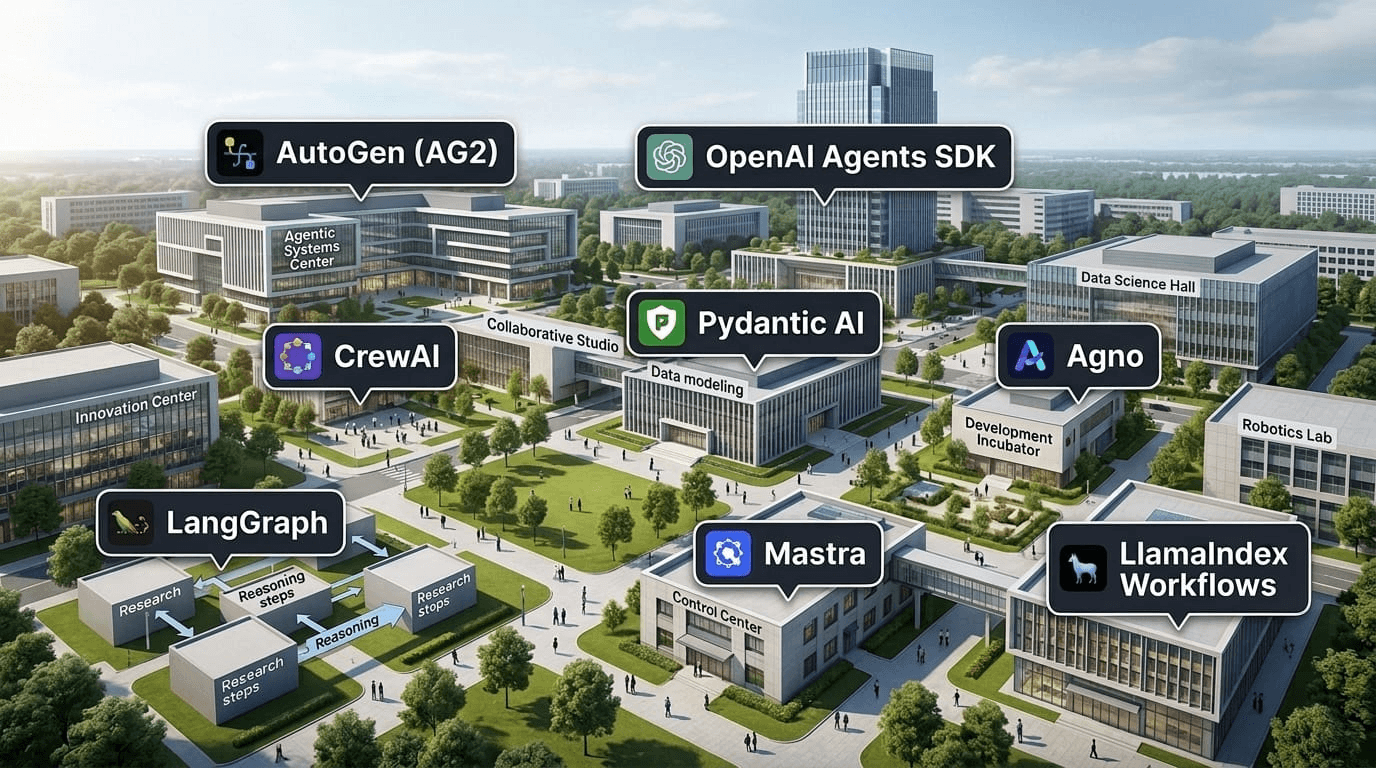

A year ago, picking an agentic framework meant choosing between "barely production-ready" and "research project with a nice README." That's changed. The frameworks covered here are mature enough for real production systems, and the differences between them are increasingly about architecture philosophy rather than stability.

This is a builder's guide. We skip the marketing summaries and go straight to what each framework actually does well, where it falls short, and when you'd pick it over the alternatives.

What makes a framework worth using

Before the list: a quick framework for evaluating frameworks.

The job of an agentic framework is to manage the loop — plan, act, observe, repeat — while staying out of your way. Good ones give you control over tool calling, memory, and multi-agent coordination without burying your logic in layers of abstraction. Bad ones make simple tasks complicated and complex tasks impossible.
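
To make "plan, act, observe, repeat" concrete, here is a minimal, framework-agnostic sketch in plain Python. The tools and `fake_model` are hypothetical stand-ins for real tool integrations and an LLM call. Every framework below wraps some version of this loop in its own abstractions.

```python
from typing import Callable

# Hypothetical tools; real frameworks register tools through their own APIs.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda query: f"3 results for {query!r}",
    "summarize": lambda text: f"summary of ({text})",
}

def fake_model(goal: str, history: list[tuple[str, str]]) -> tuple[str, str]:
    """Stand-in for an LLM call that returns (action, argument).

    A real model would pick the next step from the goal and the history;
    this stub searches first, then summarizes, then finishes.
    """
    if not history:
        return "search", goal
    if len(history) == 1:
        return "summarize", history[-1][1]
    return "finish", history[-1][1]

def run_agent(goal: str, max_steps: int = 5) -> str:
    history: list[tuple[str, str]] = []  # (action, observation) pairs
    for _ in range(max_steps):
        action, arg = fake_model(goal, history)  # plan
        if action == "finish":
            return arg
        observation = TOOLS[action](arg)         # act
        history.append((action, observation))    # observe, then repeat
    raise RuntimeError("agent did not finish within max_steps")

print(run_agent("agent frameworks"))
```

The `max_steps` cap is the simplest guard against an agent that never decides it is done; production frameworks layer retries, streaming, and state persistence on top of the same cycle.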

The signal questions:

- How does it handle tool calls? Structured output, function calling, or prompt engineering?

- What's the memory model? Short-term context window, long-term retrieval, or both?

- Can you run multiple agents? And how do they coordinate — hierarchically, as peers, or via message passing?

- What's the escape hatch? When the abstraction breaks, how much pain is it to drop to a lower level?

With that lens, here's where each major framework lands in 2026.

LangGraph

LangGraph is the production-grade layer on top of LangChain. Where LangChain gave you chains and agents, LangGraph gives you stateful, cyclical graphs. You define nodes (tasks) and edges (transitions), including conditional edges that branch based on agent output.
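
The node-and-edge model, including a conditional edge that loops back, can be illustrated with a toy graph runner. This is a plain-Python sketch, not LangGraph's API: the `Graph` class, its method names, and the draft/review nodes are all hypothetical.

```python
from typing import Callable

State = dict  # shared state passed from node to node

class Graph:
    """Toy state graph: nodes transform state, edges choose the next node."""

    def __init__(self) -> None:
        self.nodes: dict[str, Callable[[State], State]] = {}
        self.edges: dict[str, Callable[[State], str]] = {}

    def add_node(self, name: str, fn: Callable[[State], State]) -> None:
        self.nodes[name] = fn

    def add_edge(self, src: str, dst: str) -> None:
        self.edges[src] = lambda state: dst  # unconditional transition

    def add_branch(self, src: str, router: Callable[[State], str]) -> None:
        self.edges[src] = router  # router inspects state and names the next node

    def run(self, start: str, state: State, max_steps: int = 20) -> State:
        node = start
        for _ in range(max_steps):
            if node == "END":
                return state
            state = self.nodes[node](state)
            node = self.edges[node](state)
        raise RuntimeError("graph did not reach END")

def draft(state: State) -> State:
    state["drafts"] += 1
    state["text"] = f"draft v{state['drafts']}"
    return state

def review(state: State) -> State:
    state["approved"] = state["drafts"] >= 2  # pretend the reviewer wants one revision
    return state

g = Graph()
g.add_node("draft", draft)
g.add_node("review", review)
g.add_edge("draft", "review")
g.add_branch("review", lambda s: "END" if s["approved"] else "draft")  # cycle back
final = g.run("draft", {"drafts": 0})
print(final["text"])  # draft v2
```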

What it does well: Multi-step workflows with complex branching logic. Human-in-the-loop patterns. Resumable execution — if a step fails or needs approval, LangGraph can pause and resume without losing state. It has strong support for tool calling, streaming, and checkpointing.
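
Pause-and-resume comes down to persisting state after every step and restarting from the last checkpoint. The sketch below assumes an in-memory dict as the checkpoint store and a hypothetical three-step payment flow; it shows the pattern, not LangGraph's checkpointer or interrupt API.

```python
import json
from typing import Optional

class ApprovalRequired(Exception):
    """Raised when a step must wait for a human decision."""

CHECKPOINTS: dict[str, str] = {}  # thread_id -> serialized state (stand-in for a database)
STEPS = ["prepare_payment", "await_approval", "send_payment"]

def run_workflow(thread_id: str, approval: Optional[bool] = None) -> dict:
    # Resume from the last checkpoint if one exists; otherwise start fresh.
    state = json.loads(CHECKPOINTS.get(thread_id, '{"step": 0, "log": []}'))
    while state["step"] < len(STEPS):
        name = STEPS[state["step"]]
        if name == "await_approval":
            if approval is None:
                CHECKPOINTS[thread_id] = json.dumps(state)  # persist before pausing
                raise ApprovalRequired(thread_id)
            if not approval:
                state["log"].append("rejected")
                break
        state["log"].append(name)
        state["step"] += 1
        CHECKPOINTS[thread_id] = json.dumps(state)  # checkpoint after each step
    return state

try:
    run_workflow("invoice-17")
except ApprovalRequired:
    pass  # a human reviews the checkpointed state here
state = run_workflow("invoice-17", approval=True)
print(state["log"])  # ['prepare_payment', 'await_approval', 'send_payment']
```

Because the resumed run loads the saved step index, `prepare_payment` runs exactly once even though the workflow was started twice.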

Where it's harder: The graph abstraction adds real cognitive overhead. Simple tasks that don't need resumability or complex branching feel over-engineered. The LangChain ecosystem underneath it has a lot of legacy surface area to navigate.

Pick this when: You're building workflows that need to pause for human approval, handle errors gracefully, or branch in non-trivial ways. Finance automation, compliance pipelines, and workflows that touch sensitive data tend to fit well.

CrewAI

CrewAI is built around the metaphor of a crew — a set of agents with roles, a shared goal, and a defined process for how they hand work off to each other. You define each agent's role, goal, and tools, then tell the crew how to run: sequentially, in parallel, or hierarchically.
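
The two most common process shapes can be sketched in plain Python. The `Member` class and the lambdas standing in for LLM-backed agents are hypothetical; this shows the idea of a role-based crew, not CrewAI's `Agent`/`Task`/`Crew` API.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Member:
    role: str
    goal: str
    work: Callable[[str], str]  # stand-in for an LLM call using this member's tools

def run_sequential(members: list[Member], task: str) -> str:
    output = task
    for m in members:
        output = m.work(output)  # each member builds on the previous member's output
    return output

def run_hierarchical(task: str, members: list[Member], pick: Callable[[str], str]) -> str:
    # A manager (here a plain routing function) assigns the task to one role.
    by_role = {m.role: m for m in members}
    return by_role[pick(task)].work(task)

crew = [
    Member("researcher", "gather facts", lambda t: f"notes on {t}"),
    Member("writer", "draft the piece", lambda t: f"article from [{t}]"),
    Member("reviewer", "check quality", lambda t: t + " (reviewed)"),
]
print(run_sequential(crew, "MCP"))  # article from [notes on MCP] (reviewed)
pick = lambda t: "writer" if "draft" in t else "researcher"
print(run_hierarchical("draft intro", crew, pick))  # article from [draft intro]
```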

What it does well: Getting multi-agent pipelines running fast. The role-based mental model maps well to real team structures — researcher, writer, reviewer — and makes it easy to reason about what each agent is responsible for. Good tooling for async execution and callback handling.

Where it's harder: Less control over the underlying execution loop compared to LangGraph. When agents need to negotiate or share context dynamically (rather than passing output linearly), you start fighting the abstraction.

Pick this when: You want multi-agent coordination out of the box and don't need fine-grained control over the loop. Rapid prototyping, content pipelines, and research workflows are natural fits.

AutoGen (AG2)

AutoGen started at Microsoft and now has two lineages: Microsoft's own AutoGen and AG2, a community-maintained fork led by several of the original creators. Both are built around conversational agents. Two or more agents talk to each other to complete a task, and the orchestration happens through natural language rather than hard-coded graphs or pipelines.
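
A two-agent write-run-fix conversation can be sketched in plain Python. It loosely mirrors the assistant/user-proxy pairing, but `ChatAgent`, `converse`, and the scripted replies are hypothetical stand-ins, not the AutoGen or AG2 API. The `TERMINATE` stop keyword is likewise just this sketch's convention.

```python
from dataclasses import dataclass
from typing import Callable

Message = tuple[str, str]  # (speaker name, text)

@dataclass
class ChatAgent:
    name: str
    reply: Callable[[list[Message]], str]  # stand-in for an LLM: transcript -> next message

def converse(first: ChatAgent, second: ChatAgent, opening: str,
             max_turns: int = 10, stop: str = "TERMINATE") -> list[Message]:
    transcript: list[Message] = [(first.name, opening)]
    for turn in range(max_turns):
        speaker = second if turn % 2 == 0 else first  # agents alternate; second replies first
        message = speaker.reply(transcript)
        transcript.append((speaker.name, message))
        if stop in message:  # stop keyword ends the conversation
            break
    return transcript

def coder_reply(transcript: list[Message]) -> str:
    if transcript[-1] == ("executor", "ok"):
        return "TERMINATE"
    failures = sum(1 for name, text in transcript if name == "executor" and text.startswith("error"))
    return f"code v{failures + 1}"

def executor_reply(transcript: list[Message]) -> str:
    # Pretend the first version fails and later versions run cleanly.
    return "error: NameError" if transcript[-1][1] == "code v1" else "ok"

executor = ChatAgent("executor", executor_reply)
coder = ChatAgent("coder", coder_reply)
log = converse(executor, coder, "write a function that parses dates")
for name, text in log:
    print(f"{name}: {text}")
```

Note that the structure (how many fix attempts happen) emerges from the exchange rather than being declared up front, which is both the flexibility and the unpredictability described below.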

What it does well: Flexible multi-agent conversations where you don't know the exact structure upfront. Strong support for code-executing agents — AutoGen can write, run, and debug code in a loop. The AssistantAgent/UserProxyAgent pattern is well-understood and widely documented.

Where it's harder: Conversational coordination is less predictable than structured graphs. Latency adds up when agents are talking through multiple turns to accomplish something that could be a single function call. Production deployment is more work than with frameworks designed around observability from the start.

Pick this when: You're building coding assistants, data analysis agents, or research pipelines where the task structure is emergent. Also strong for experimentation — it's easy to add a new agent to a conversation and see what happens.

OpenAI Agents SDK

Released in early 2025, the OpenAI Agents SDK is the production successor to Swarm. It gives you agents, handoffs (transferring control between agents), tools, and guardrails — all in a lightweight package that stays close to the OpenAI API surface.

What it does well: Dead simple setup if you're already on OpenAI models. Handoffs between agents are first-class: one agent can explicitly transfer a task to a specialist agent with context attached. Built-in tracing and a clean mental model. The guardrails system lets you validate inputs and outputs without wrapping everything in try/except.
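
Handoffs and input guardrails can be sketched in plain Python to show the control flow. Everything here (`Handoff`, `SimpleAgent`, `no_card_numbers`, the triage and billing agents) is hypothetical and is not the OpenAI Agents SDK's API; the point is that a handoff is just a return value carrying a target and context, and a guardrail runs before any agent sees the input.

```python
from dataclasses import dataclass, field
from typing import Callable, Union

@dataclass
class Handoff:
    target: str                                  # name of the agent taking over
    context: dict = field(default_factory=dict)  # data passed along with control

@dataclass
class SimpleAgent:
    name: str
    handle: Callable[[str, dict], Union[str, Handoff]]  # final answer or a handoff

class GuardrailTripped(Exception):
    pass

def no_card_numbers(message: str) -> None:
    # Hypothetical input guardrail: 13+ digits looks like a payment card number.
    if sum(ch.isdigit() for ch in message) >= 13:
        raise GuardrailTripped("possible card number in input")

def run_with_handoffs(agents: dict, start: str, message: str,
                      guardrails=(), max_handoffs: int = 5) -> tuple[str, str]:
    for check in guardrails:
        check(message)  # validate input before any agent sees it
    current, context = start, {}
    for _ in range(max_handoffs + 1):
        result = agents[current].handle(message, context)
        if isinstance(result, Handoff):
            current, context = result.target, {**context, **result.context}
            continue
        return current, result
    raise RuntimeError("too many handoffs")

def triage(message: str, context: dict) -> Union[str, Handoff]:
    if "charged" in message or "refund" in message:
        return Handoff("billing", {"topic": "refund"})
    return "Triage: how else can I help?"

def billing(message: str, context: dict) -> str:
    return f"Billing agent handling {context['topic']}"

agents = {"triage": SimpleAgent("triage", triage), "billing": SimpleAgent("billing", billing)}
print(run_with_handoffs(agents, "triage", "I was charged twice", [no_card_numbers]))
# ('billing', 'Billing agent handling refund')
```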

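Where it's harder: Designed around OpenAI's models and APIs. The SDK can call other providers (for example, through its LiteLLM integration), but OpenAI-hosted tools and the default tracing backend assume OpenAI, so swapping in Anthropic, Gemini, or open-source models takes more work than with frameworks that are model-agnostic by design. The ecosystem is smaller and moving fast, so expect API churn.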

Pick this when: You're building on OpenAI and want something lighter and more explicit than LangGraph. Customer service bots, triage systems, and multi-step support workflows are natural fits.

Pydantic AI

Pydantic AI is one of the newer entries on this list and has drawn a lot of attention from Python developers who care about type safety. Built by the team behind Pydantic, it applies the same philosophy (strong typing, validation, and clear data models) to agent development.

What it does well: Structured outputs that are actually validated. Your agent either returns what you asked for or raises an error you can handle. Model-agnostic from day one. The developer experience for Python engineers is excellent: it feels like writing normal Python, not fighting a framework.
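
The "valid result or a handleable error" contract can be sketched with the standard library alone. This is a stand-in for what Pydantic models do, not Pydantic AI's API: `Invoice`, `extract`, and the retry-with-feedback loop are hypothetical, and the model is simulated by an iterator of canned responses.

```python
import json
from dataclasses import dataclass
from typing import Callable

class OutputValidationError(ValueError):
    pass

@dataclass(frozen=True)
class Invoice:
    vendor: str
    total_cents: int

    @classmethod
    def parse(cls, raw: str) -> "Invoice":
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as e:
            raise OutputValidationError(f"not JSON: {e}") from e
        if not isinstance(data, dict):
            raise OutputValidationError("expected a JSON object")
        vendor, total = data.get("vendor"), data.get("total_cents")
        if not isinstance(vendor, str) or not vendor:
            raise OutputValidationError("vendor must be a non-empty string")
        # bool is a subclass of int, so reject it explicitly.
        if not isinstance(total, int) or isinstance(total, bool) or total < 0:
            raise OutputValidationError("total_cents must be a non-negative integer")
        return cls(vendor, total)

def extract(call_model: Callable[[str], str], prompt: str, retries: int = 1) -> Invoice:
    last_error = None
    for _ in range(retries + 1):
        # On a retry, feed the validation error back so the model can correct itself.
        ask = prompt if last_error is None else f"{prompt}\nPrevious output was invalid: {last_error}"
        try:
            return Invoice.parse(call_model(ask))
        except OutputValidationError as e:
            last_error = e
    raise last_error

responses = iter([
    '{"vendor": "Acme", "total_cents": "12.50"}',  # wrong type: rejected
    '{"vendor": "Acme", "total_cents": 1250}',     # valid on the retry
])
invoice = extract(lambda prompt: next(responses), "Extract the invoice fields as JSON.")
print(invoice)  # Invoice(vendor='Acme', total_cents=1250)
```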

Where it's harder: Still maturing. Complex multi-agent coordination patterns are less first-class than in LangGraph or CrewAI. If you need production-grade resumable workflows today, you'll be rolling more of that yourself.

Pick this when: You care about type safety and validated outputs over everything else. API integrations, data extraction pipelines, and any agent that needs to return structured data reliably.

Agno

Agno (formerly phidata) had a full rebrand and architectural overhaul in 2025. The pitch: a high-performance, model-agnostic framework for building agents and multi-agent teams. The headline feature is speed — Agno claims to be significantly faster at agent instantiation than comparable frameworks.

What it does well: Clean multi-modal support (text, images, audio, video) out of the box. Built-in memory and knowledge base primitives. The team abstraction is simpler than CrewAI for many use cases. Good observability tooling built in.

Where it's harder: Smaller community and ecosystem than LangGraph or CrewAI. Fewer production case studies to learn from. The rapid evolution means patterns that worked six months ago may need updating.

Pick this when: You need multi-modal agents or a framework that handles memory and knowledge retrieval natively. Also worth looking at if raw performance is a priority.

Mastra

Mastra is the TypeScript-native option on this list. Built for teams that work in Node.js environments, it brings LangGraph-style workflow graphs to JavaScript with first-class support for tool calling, RAG, and multi-agent systems.

What it does well: Graph-based workflows comparable to LangGraph's, designed TypeScript-first. Strong integration with Next.js and other Node.js stacks. The workflow primitive handles both sequential and parallel execution well. Good support for evals, so you can test your agents before shipping them.
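
The sequential-versus-parallel distinction is language-independent, so here it is sketched in Python with `asyncio` to keep one language across this guide. The step functions are hypothetical stand-ins for tool calls; Mastra expresses the same shape in TypeScript with its own workflow API.

```python
import asyncio

async def fetch_profile(user_id: str) -> dict:
    await asyncio.sleep(0.01)  # stand-in for a tool or API call
    return {"user": user_id}

async def fetch_orders(user_id: str) -> list:
    await asyncio.sleep(0.01)  # stand-in for a tool or API call
    return ["order-1", "order-2"]

async def summarize(profile: dict, orders: list) -> str:
    return f"{profile['user']} has {len(orders)} orders"

async def workflow(user_id: str) -> str:
    # Parallel step: the two fetches are independent, so run them concurrently.
    profile, orders = await asyncio.gather(fetch_profile(user_id), fetch_orders(user_id))
    # Sequential step: summarizing depends on both results.
    return await summarize(profile, orders)

print(asyncio.run(workflow("u42")))  # u42 has 2 orders
```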

Where it's harder: The Python ecosystem for AI is still larger and more mature. If your team works in both languages, you'll maintain two different agent stacks or commit to one. Documentation is still catching up to the feature surface.

Pick this when: Your stack is TypeScript-first and you don't want Python in your deployment. Full-stack teams building AI into existing Next.js or Node.js applications will find it integrates naturally.

LlamaIndex Workflows

LlamaIndex built its reputation on RAG (retrieval-augmented generation), and Workflows extends that foundation into full agentic execution. If your agent needs to reason over large document sets, LlamaIndex's data connectors and indexing primitives remain among the strongest available.

What it does well: Knowledge retrieval at scale. Workflows adds event-driven orchestration that can handle long-running tasks with async execution. If your agent's job is "find the right information and do something with it," the entire stack is optimized for that path.
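
Event-driven orchestration means each step consumes one event type and emits the next, and the runner dispatches on type until a terminal event appears. The sketch below is plain, synchronous Python with a toy keyword retriever; the event classes, `step` decorator, and documents are hypothetical and only loosely echo LlamaIndex Workflows, whose real API differs and supports async steps.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class QueryEvent:
    text: str

@dataclass
class RetrievedEvent:
    query: str
    docs: list

@dataclass
class DoneEvent:
    answer: str

DOCS = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
    "Refund requests require an order number.",
]

HANDLERS: dict[type, Callable] = {}  # event type -> step that consumes it

def step(event_type: type):
    """Register a function as the step that handles `event_type`."""
    def register(fn: Callable) -> Callable:
        HANDLERS[event_type] = fn
        return fn
    return register

def words(text: str) -> set:
    return set(text.lower().replace(".", "").split())

@step(QueryEvent)
def retrieve(ev: QueryEvent) -> RetrievedEvent:
    q = words(ev.text)
    # Toy keyword-overlap retriever; a real pipeline would use an index and embeddings.
    scored = [(len(q & words(d)), d) for d in DOCS]
    hits = [d for score, d in sorted(scored, key=lambda p: p[0], reverse=True) if score > 0]
    return RetrievedEvent(ev.text, hits[:2])

@step(RetrievedEvent)
def synthesize(ev: RetrievedEvent) -> DoneEvent:
    # Stand-in for an LLM call that writes an answer from the retrieved passages.
    return DoneEvent(" ".join(ev.docs) if ev.docs else "No relevant documents found.")

def run_events(event) -> str:
    while not isinstance(event, DoneEvent):
        event = HANDLERS[type(event)](event)  # dispatch on the event's type
    return event.answer

print(run_events(QueryEvent("what do refund requests require")))
# Refund requests require an order number.
```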

Where it's harder: Less natural fit for agents that don't need heavy retrieval. The framework's origins in RAG mean the abstraction layers can feel like overhead for task-focused agents.

Pick this when: Your agent is primarily working with unstructured data — documents, PDFs, wikis, knowledge bases. Legal research, enterprise search, and compliance workflows built around document retrieval are strong use cases.

How to choose

The short version:

- Complex branching workflows that need to pause or recover: LangGraph

- Multi-agent pipelines you want running fast: CrewAI

- Conversational agents and coding assistants: AutoGen

- OpenAI stack, want something lightweight: OpenAI Agents SDK

- Python with validated structured outputs: Pydantic AI

- Multi-modal or performance-critical: Agno

- TypeScript-native stack: Mastra

- Heavy document retrieval: LlamaIndex Workflows

Two things that matter more than framework choice: what model you're using, and what tools your agents can reach. A well-connected agent on a mediocre framework will outperform a framework-perfect agent that can't access the right data.

That's where MCP (Model Context Protocol) comes in. Whatever framework you pick, routing your tool access through a dedicated MCP layer lets you centralize security, observability, and permission controls that most frameworks don't handle natively. The framework handles the loop. The tool layer handles what the agent can actually touch.
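
The separation between "the loop" and "what the agent can touch" can be sketched as a small policy gateway that every tool call passes through. This is a hypothetical illustration of per-agent permissions plus an audit log, not the MCP specification or any product's API; in practice, each framework's tool wrapper would simply delegate to something like `gateway.call`.

```python
import time
from typing import Any, Callable

class PermissionDenied(Exception):
    pass

class ToolGateway:
    """Hypothetical policy layer between agents and tools."""

    def __init__(self) -> None:
        self.tools: dict[str, Callable[..., Any]] = {}
        self.grants: dict[str, set[str]] = {}  # agent name -> allowed tool names
        self.audit: list[dict] = []            # one record per attempted call

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def grant(self, agent: str, *tool_names: str) -> None:
        self.grants.setdefault(agent, set()).update(tool_names)

    def call(self, agent: str, tool: str, **kwargs: Any) -> Any:
        allowed = tool in self.grants.get(agent, set())
        self.audit.append({"ts": time.time(), "agent": agent, "tool": tool, "allowed": allowed})
        if not allowed:
            raise PermissionDenied(f"{agent} may not call {tool}")
        return self.tools[tool](**kwargs)

gateway = ToolGateway()
gateway.register("read_ticket", lambda ticket_id: f"ticket {ticket_id}")
gateway.register("issue_refund", lambda amount: f"refunded {amount}")
gateway.grant("support_bot", "read_ticket")  # read-only access for this agent

print(gateway.call("support_bot", "read_ticket", ticket_id=7))  # ticket 7
try:
    gateway.call("support_bot", "issue_refund", amount=10)
except PermissionDenied as e:
    print(e)  # support_bot may not call issue_refund
```

Keeping the allowlist and audit trail outside the agent framework means you can swap LangGraph for CrewAI (or anything else on this list) without re-implementing access control.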

If you're wiring agents into production systems, Civic Nexus handles the tool connectivity, auth, and guardrails — regardless of which framework you're building on top of.